A Principled AI Discussion in Asilomar

The Asilomar Conference took place against a backdrop of growing interest from wider society in the potential of artificial intelligence (AI), and a sense that those playing a part in its development have a responsibility and opportunity to shape it for the best. The purpose of the Conference, which brought together leaders from academia and industry, was to discern the AI community’s shared vision for AI, should it exist.

In planning the meeting, we reviewed reports on the opportunities and threats created by AI and compiled a long list of the diverse views held on how the technology should be managed. We then attempted to distill this list into a set of principles by identifying areas of overlap and potential simplification. Before the conference, we extensively surveyed attendees, gathering suggestions for improvements and additional principles. These responses were folded into a significantly revised list for use at the meeting.

In Asilomar, we gathered further feedback in two stages. To begin with, small breakout groups discussed the principles and produced detailed feedback. This process generated several new principles, improved versions of the existing principles and, in several cases, multiple competing versions of a single principle. Finally, we surveyed the full set of attendees to determine the level of support for each version of each principle.

The final list consisted of 23 principles, each of which received support from at least 90% of the conference participants. These “Asilomar Principles” have since become one of the most influential sets of governance principles, and serve to guide our work on AI.

To start the discussion, here are some of the things other AI researchers who signed the Principles had to say about them.

Value Alignment: Highly autonomous AI systems should be designed so that their goals and behaviors can be assured to align with human values throughout their operation.

“Value alignment is a big one. Robots aren’t going to try to revolt against humanity, but they’ll just try to optimize whatever we tell them to do. So we need to make sure to tell them to optimize for the world we actually want.”

-Anca Dragan, Assistant Professor in the EECS Department at UC Berkeley, and co-PI for the Center for Human Compatible AI

Read her complete interview here.

Shared Prosperity: The economic prosperity created by AI should be shared broadly, to benefit all of humanity.

“I consider that one of the greatest dangers is that people either deal with AI in an irresponsible way or maliciously — I mean for their personal gain. And by having a more egalitarian society, throughout the world, I think we can reduce those dangers. In a society where there’s a lot of violence, a lot of inequality, the risk of misusing AI or having people use it irresponsibly in general is much greater. Making AI beneficial for all is very central to the safety question.”

-Yoshua Bengio, Professor of CSOR at the University of Montreal, and head of the Montreal Institute for Learning Algorithms (MILA)

Read his complete interview here.

Importance: Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources.

“I believe that AI will create profound change even before it is ‘advanced’ and thus we need to plan and manage growth of the technology. As humans we are not good at long-term planning because our civil systems don’t encourage it, however, this is an area in which we must develop our abilities to ensure a responsible and beneficial partnership between man and machine.”

-Kay Firth-Butterfield, Executive Director of AI-Austin.org, and an adjunct Professor of Law at the University of Texas at Austin

Read her complete interview here.

Personal Privacy: People should have the right to access, manage and control the data they generate, given AI systems’ power to analyze and utilize that data.

“It’s absolutely crucial that individuals should have the right to manage access to the data they generate… AI does open new insight to individuals and institutions. It creates a persona for the individual or institution – personality traits, emotional make-up, lots of the things we learn when we meet each other. AI will do that too and it’s very personal. I want to control how persona is created. A persona is a fundamental right.”

-Guruduth Banavar, VP, IBM Research, Chief Science Officer, Cognitive Computing

Read his complete interview here.

Value Alignment: Highly autonomous AI systems should be designed so that their goals and behaviors can be assured to align with human values throughout their operation.

“The one closest to my heart. … AI systems should behave in a way that is aligned with human values. But actually, I would be even more general than what you’ve written in this principle. Because this principle has to do not only with autonomous AI systems, but I think this is very important and essential also for systems that work tightly with humans in the loop, and also where the human is the final decision maker. Because when you have human and machine tightly working together, you want this to be a real team. So you want the human to be really sure that the AI system works with values aligned to that person. It takes a lot of discussion to understand those values.”

-Francesca Rossi, Research scientist at the IBM T.J. Watson Research Centre, and a professor of computer science at the University of Padova, Italy, currently on leave

Read her complete interview here.

AI Arms Race: An arms race in lethal autonomous weapons should be avoided.

“One reason that I got involved in these discussions is that there are some topics I think are very relevant today, and one of them is the arms race that’s happening amongst militaries around the world already, today. This is going to be very destabilizing. It’s going to upset the current world order when people get their hands on these sorts of technologies. It’s actually stupid AI that they’re going to be fielding in this arms race to begin with and that’s actually quite worrying – that it’s technologies that aren’t going to be able to distinguish between combatants and civilians, and aren’t able to act in accordance with international humanitarian law, and will be used by despots and terrorists and hacked to behave in ways that are completely undesirable. And that’s something that’s happening today. You have to see the recent segment on 60 Minutes to see the terrifying swarms of robot UAVs that the American military is now experimenting with.”

-Toby Walsh, Guest Professor at Technical University of Berlin, Professor of Artificial Intelligence at the University of New South Wales, and leader of the Algorithmic Decision Theory group at Data61, Australia’s Centre of Excellence for ICT Research

Read his complete interview here.

AI Arms Race: An arms race in lethal autonomous weapons should be avoided.

“I’m not a fan of wars, and I think it could be extremely dangerous. Obviously I think that the technology has a huge potential, and even just with the capabilities we have today it’s not hard to imagine how it could be used in very harmful ways. I don’t want my contributions to the field and any kind of techniques that we’re all developing to do harm to other humans or to develop weapons or to start wars or to be even more deadly than what we already have.”

-Stefano Ermon, Assistant Professor in the Department of Computer Science at Stanford University, where he is affiliated with the Artificial Intelligence Laboratory

Read his complete interview here.

Capability Caution: There being no consensus, we should avoid strong assumptions regarding upper limits on future AI capabilities.

“I agree! As a scientist, I’m against making strong or unjustified assumptions about anything, so of course I agree. Yet this principle bothers me … because it seems to be implicitly saying that there is an immediate danger that AI is going to become superhumanly, generally intelligent very soon, and we need to worry about this issue. This assertion … concerns me because I think it’s a distraction from what are likely to be much bigger, more important, more near term, potentially devastating problems. I’m much more worried about job loss and the need for some kind of guaranteed health-care, education and basic income than I am about Skynet. And I’m much more worried about some terrorist taking an AI system and trying to program it to kill all Americans than I am about an AI system suddenly waking up and deciding that it should do that on its own.”

-Dan Weld, Professor of Computer Science & Engineering and Entrepreneurial Faculty Fellow at the University of Washington

Read his complete interview here.

Capability Caution: There being no consensus, we should avoid strong assumptions regarding upper limits on future AI capabilities.

“In many areas of computer science, such as complexity or cryptography, the default assumption is that we deal with the worst case scenario. Similarly, in AI Safety, we should assume that AI will become maximally capable and prepare accordingly. If we are wrong, we will still be in great shape.”

-Roman Yampolskiy, Associate Professor of CECS at the University of Louisville, and founding director of the Cyber Security Lab

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014.

Related content

Other posts about AI, AI Safety Principles

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

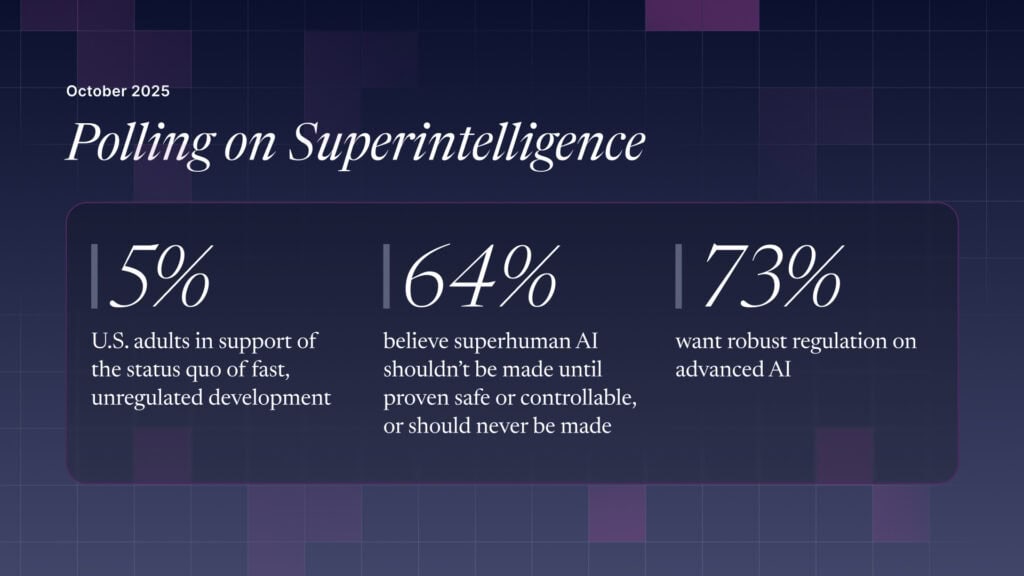

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI