Communications

The educational and communications wing of the FLI team.

Introduction

Informing the discourse around our focus areas.

The outreach team works to improve awareness, provide accurate and accessible information, and deepen understanding of our focus areas. Using evidence-based strategies of risk communication, we seek to emphasise positive steps by which extreme risks from transformative technologies can be reduced, and global prospects enhanced.

To these ends, we create informative content, operate dynamic social media accounts, collaborate with journalists, and run the Future of Life Award, which celebrates unsung individuals whose heroic efforts made our world a better, safer place.

Our work

Outreach projects

Learn more about our ongoing projects:

The Pro-Human AI Declaration

A remarkable bipartisan coalition of leading organizations and prominent figures has announced its support for a set of Pro-Human principles to guide our shared future with AI.

Protect What’s Human

We’re building a pro-human movement for commonsense regulation to keep AI safe and under our control, kicking off with a national ad campaign airing in the US.

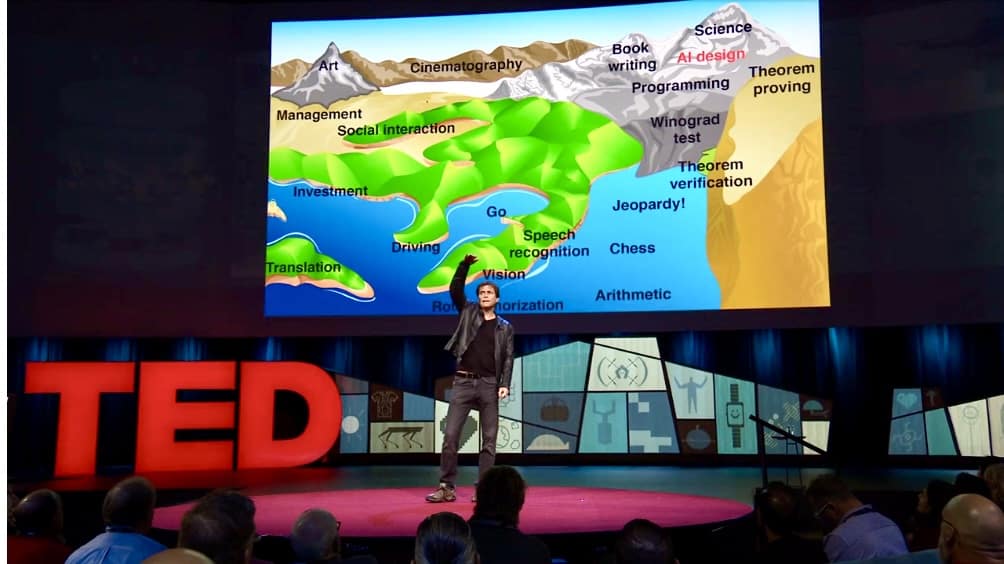

Control Inversion

Why the superintelligent AI agents we are racing to create would absorb power, not grant it | The latest study from Anthony Aguirre.

Statement on Superintelligence

A stunningly broad coalition has come out against unsafe superintelligence: AI researchers, faith leaders, business pioneers, policymakers, national security staff, and actors stand together.

Creative Contest: Keep The Future Human

$100,000+ in prizes for creative digital media that engages with the essay's key ideas, helps them to reach a wider range of people, and motivates action in the real world.

Digital Media Accelerator

The Digital Media Accelerator supports digital content from creators raising awareness and understanding about ongoing AI developments and issues.

Keep The Future Human

Why and how we should close the gates to AGI and superintelligence, and what we should build instead | A new essay by Anthony Aguirre, Executive Director of FLI.

Multistakeholder Engagement for Safe and Prosperous AI

FLI is launching new grants to educate and engage stakeholder groups, as well as the general public, in the movement for safe, secure and beneficial AI.

Superintelligence Imagined Creative Contest

A contest for the best creative educational materials on superintelligence, its associated risks, and the implications of this technology for our world. 5 prizes at $10,000 each.

The Elders Letter on Existential Threats

The Elders, the Future of Life Institute and a diverse range of preeminent public figures are calling on world leaders to urgently address the ongoing harms and escalating risks of the climate crisis, pandemics, nuclear weapons, and ungoverned AI.

Combatting Deepfakes

As part of a growing coalition of concerned organizations, FLI is calling on lawmakers to take meaningful steps to disrupt the AI-driven deepfake supply chain.

Educating about Autonomous Weapons

Military AI applications are rapidly expanding. We develop educational materials about how certain narrow classes of AI-powered weapons can harm national security and destabilize civilization, notably weapons where kill decisions are fully delegated to algorithms.

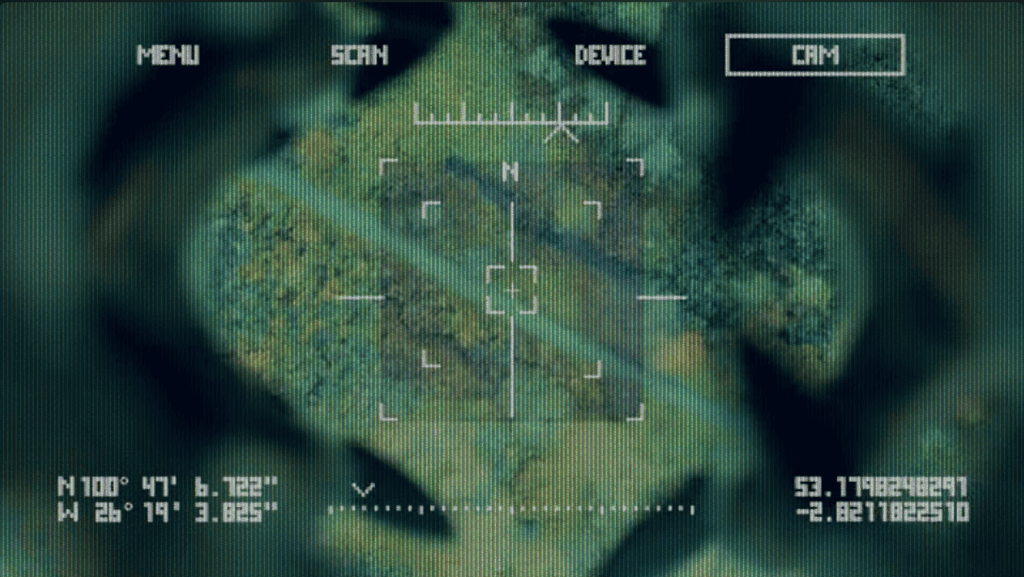

Artificial Escalation

Our fictional film depicts a world where artificial intelligence ('AI') is integrated into nuclear command, control and communications systems ('NC3') with terrifying results.

Worldbuilding Competition

The Future of Life Institute accepted entries from teams across the globe, to compete for a prize purse of up to $100,000 by designing visions of a plausible, aspirational future that includes strong artificial intelligence.

Future of Life Award

Every year, the Future of Life Award is given to one or more unsung heroes who have made a significant contribution to preserving the future of life.

Future of Life Institute Podcast

A podcast dedicated to hosting conversations with some of the world's leading thinkers and doers in the field of emerging technology and risk reduction. 140+ episodes since 2015, 4.8/5 stars on Apple Podcasts.

All our work

Our content

Featured videos

Here are a few of the videos that we have produced ourselves or in collaboration with other content creators:

Why it’s SO hard to cure cancer

1 April, 2026

The Embarrassingly Simple Reason AI Can’t Cure Cancer

25 March, 2026

How To Make AI Good For Humanity

13 March, 2026

Economist explains what happens after AI takes all jobs

26 February, 2026

AI’s first kills show we’re close to disaster. Godfather of AI.

15 January, 2026

AI expert exposes why he left OpenAI

18 December, 2025

The History of AI Explained: Crash Course Futures of AI #1

19 November, 2025

Achievements

Some of the things we have achieved

Here are a few of our proudest achievements in this area:

Celebrated 18 unsung heroes with Future of Life Awards

Every year since 2017, the Future of Life Award has celebrated the contributions of people who helped preserve the prospects of life.

See the award

Produced a viral video series raising the alarm on lethal autonomous weapons

We produced two short films, with a combined 75+ million views, depicting a world in which lethal autonomous weapons have been allowed to proliferate.

Watch the videos

Interviewed 100+ key experts in our focus areas

Over the past 5 years, our team has hosted in-depth conversations with 100+ leading figures in fields relating to our focus areas, all available for free on our podcast.

Listen to the podcast

Media mentions

Posts

Featured posts

Prominent Scientists, Faith Leaders, Policymakers and Artists Call for a Prohibition on Superintelligence, as Poll Shows Americans Don’t Want It

Initial signatories include AI pioneers Yoshua Bengio and Geoffrey Hinton, leading media voices Steve Bannon and Glenn Beck, Obama's National Security Advisor Susan Rice, business trailblazers Steve Wozniak and Richard Branson, five Nobel Laureates, former Irish President Mary Robinson, actors Stephen Fry and Joseph Gordon-Levitt, and hundreds of others.

27 March, 2026

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

The collaboration will produce a Crisis Counselor Training Curriculum and a statewide AI Harms Reporting Form targeting dangerous AI companion applications

9 March, 2026

“This is What it Means to be Pro-Human” Declares Broad Coalition of Conservative, Progressive, and Civil Society Groups in Statement of Shared Principles on AI

Amid a rising backlash to Silicon Valley overreach, a remarkably diverse group from across the political spectrum announced a set of AI principles to clearly define the goals of the emerging pro-human movement.

4 March, 2026

Statement from Max Tegmark on the Department of War’s ultimatum

"Our safety and basic rights must not be at the mercy of a company's internal policy; lawmakers must work to codify these overwhelmingly popular red lines into law."

27 February, 2026

Future of Life Institute Launches Multimillion Dollar Nationwide AI Regulation Campaign

The Protect What’s Human campaign will push for commonsense AI safety rules at federal and state level

9 February, 2026

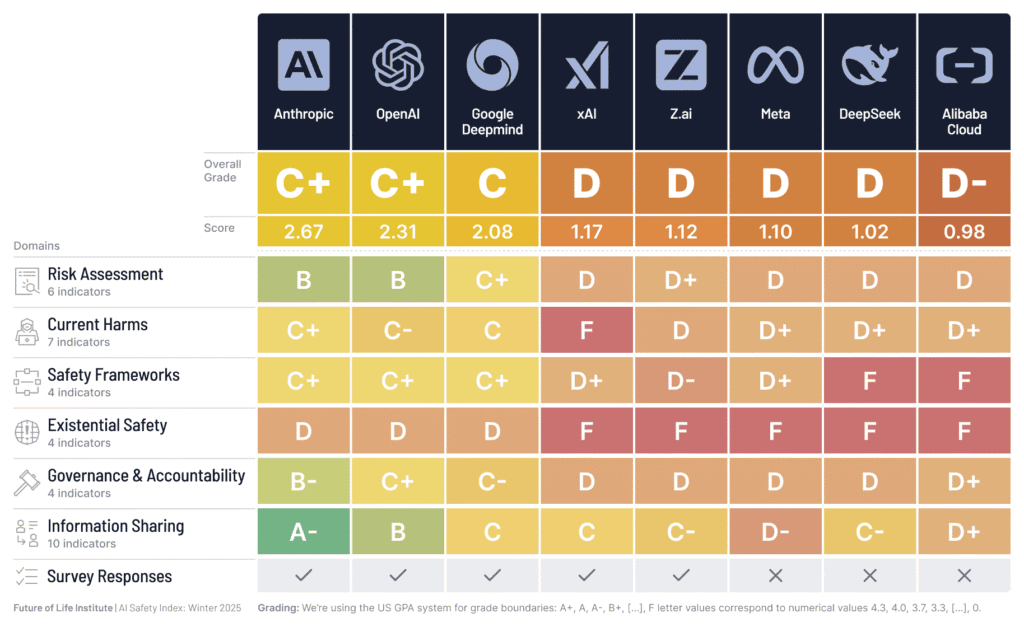

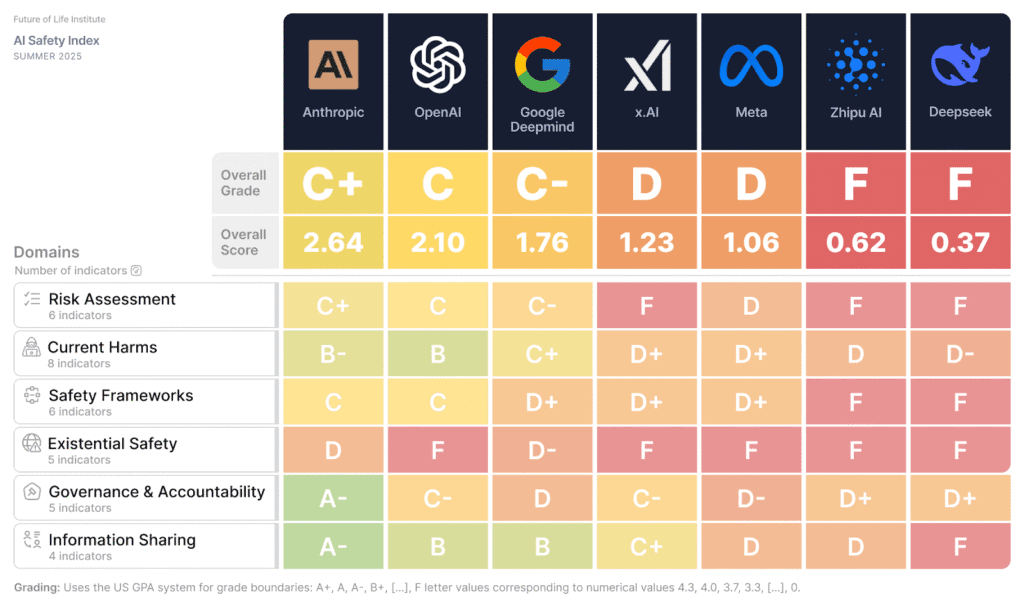

AI Company Safety Practices Fall Short of Public Commitments and Show Structural Weaknesses, as Top Performers Widen the Gap

But in a win for transparency, five leading companies participated in the scorecard's survey for the first time, providing critical new information to the public.

2 December, 2025

Google DeepMind Falls Behind OpenAI in Latest Safety Review; All AI Companies Still Falling Short, Say Experts

The Future of Life Institute’s 2025 summer update to its AI Safety Index shows some companies making incremental progress, but dangerous gaps remain in key categories such as risk assessment and controlling the systems they plan to build.

17 July, 2025

Are we close to an intelligence explosion?

AIs are inching ever-closer to a critical threshold. Beyond this threshold lie great risks—but crossing it is not inevitable.

21 March, 2025

Podcasts

Featured podcasts

2 November, 2021

Rohin Shah on the State of AGI Safety Research in 2021

1 October, 2021

Filippa Lentzos on Global Catastrophic Biological Risks

7 September, 2021

James Manyika on Global Economic and Technological Trends

22 January, 2021

Beatrice Fihn on the Total Elimination of Nuclear Weapons

All episodes

Resources

Featured resources

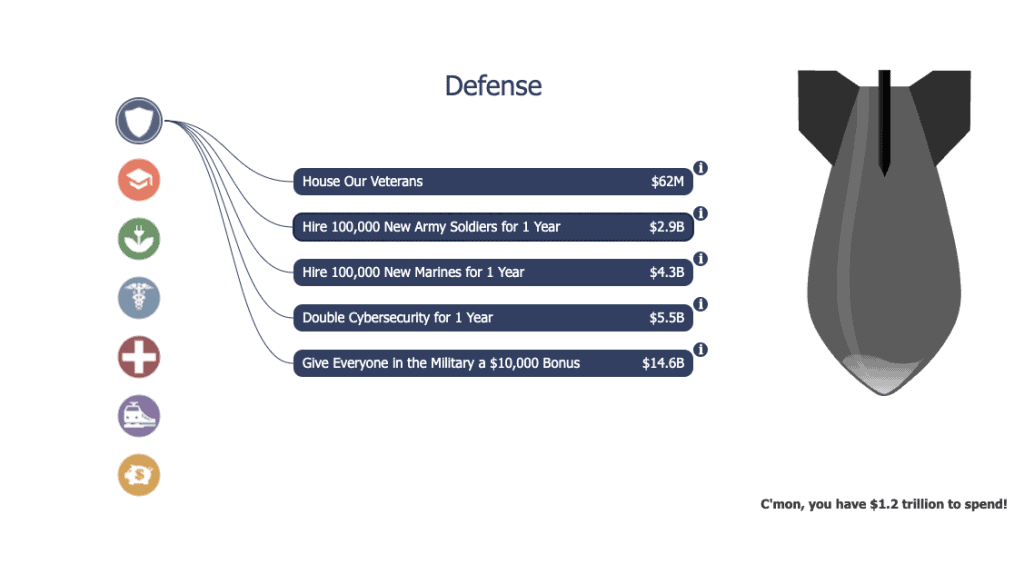

Trillion Dollar Nukes

Would you spend $1.2 trillion tax dollars on nuclear weapons? How much are nuclear weapons really worth? Is upgrading the […]

24 October, 2016

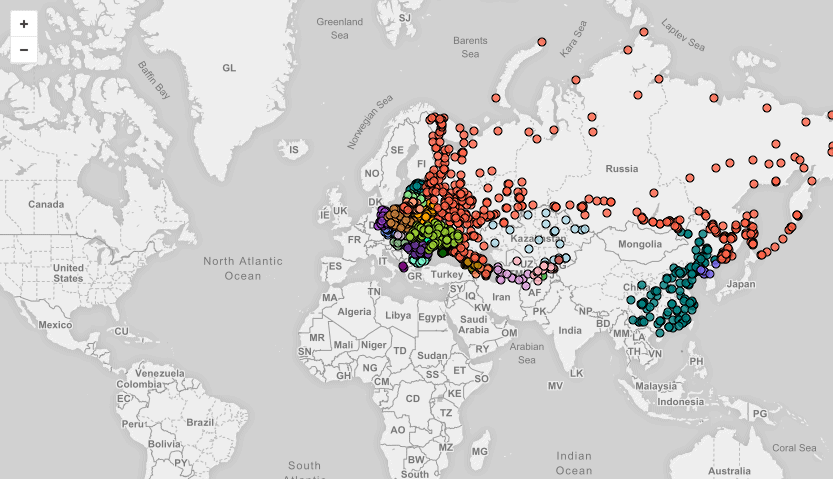

1100 Declassified U.S. Nuclear Targets

The National Security Archive recently published a declassified list of U.S. nuclear targets from 1956, which spanned 1,100 locations across Eastern Europe, Russia, China, and North Korea. The map below shows all 1,100 nuclear targets from that list, and we’ve partnered with NukeMap to demonstrate how catastrophic a nuclear exchange between the United States and Russia could be.

12 May, 2016

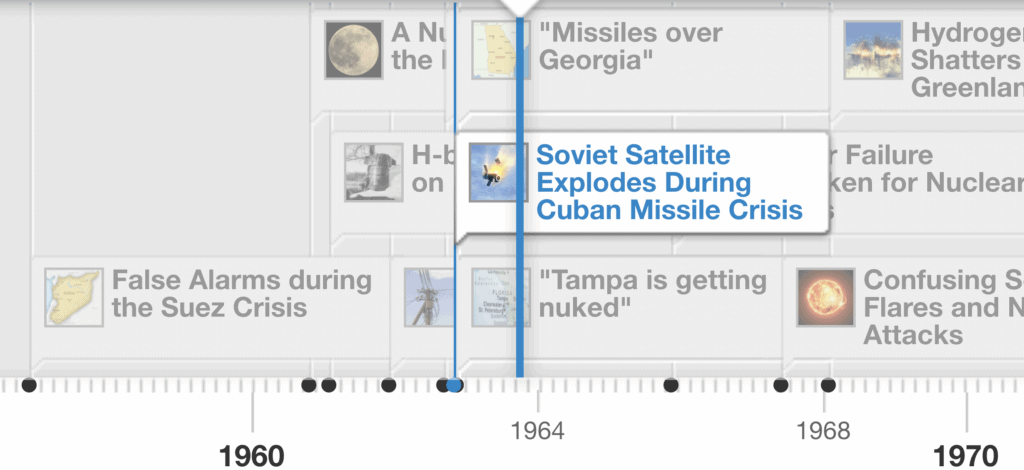

Accidental Nuclear War: a Timeline of Close Calls

The most devastating military threat arguably comes from a nuclear war started not intentionally but by accident or miscalculation. Accidental […]

23 February, 2016

Our team

Meet our outreach team

We're a 100% remote team spread all across the western hemisphere.

Ben Cumming

Chase Hardin

Gus Docker

Maggie Munro

Taylor Jones

Contact us

Let's put you in touch with the right person.

We do our best to respond to all incoming queries within three business days. Our team is spread across the globe, so please be considerate and remember that the person you are contacting may not be in your timezone.

Please direct media requests and speaking invitations for Max Tegmark to press@futureoflife.blackfin.biz. All other inquiries can be sent to contact@futureoflife.blackfin.biz.