Safe Artificial Intelligence May Start with Collaboration


Research Culture Principle: A culture of cooperation, trust, and transparency should be fostered among researchers and developers of AI.

Competition and secrecy are just part of doing business. Even in academia, researchers often keep ideas and impending discoveries to themselves until grants or publications are finalized. But sometimes even competing companies and research labs work together. It’s not uncommon for organizations to find that it’s in their best interests to cooperate in order to solve problems and address challenges that would otherwise result in duplicated costs and wasted time.

Such friendly behavior helps groups more efficiently address regulation, come up with standards, and share best practices on safety. While such companies or research labs — whether in artificial intelligence or any other field — cooperate on certain issues, their objective is still to be the first to develop a new product or make a new discovery.

How can organizations, especially for new technologies like artificial intelligence, draw the line between working together to ensure safety and working individually to protect new ideas? Since the Research Culture Principle doesn’t differentiate between collaboration on AI safety and collaboration on AI development, it can be interpreted broadly, as seen from the responses of the AI researchers and ethicists who discussed this principle with me.

A Necessary First Step

A common theme among those I interviewed was that this Principle presented an important first step toward the development of safe and beneficial AI.

“I see this as a practical distillation of the Asilomar Principles,” said Harvard professor Joshua Greene. “They are not legally binding. At this early stage, it’s about creating a shared understanding that beneficial AI requires an active commitment to making it turn out well for everybody, which is not the default path. To ensure that this power is used well when it matures, we need to have already in place a culture, a set of norms, a set of expectations, a set of institutions that favor good outcomes. That’s what this is about — getting people together and committed to directing AI in a mutually beneficial way before anyone has a strong incentive to do otherwise.”

In fact, all of the people I interviewed agreed with the Principle. The questions and concerns they raised typically had more to do with the potential challenge of implementing it.

Susan Craw, a professor at Robert Gordon University, liked the Principle, but she wondered how it would apply to corporations.

She explained, “That would be a lovely principle to have, it can work perhaps better in universities, where there is not the same idea of competitive advantage as in industry. … And cooperation and trust among researchers … well without cooperation none of us would get anywhere, because we don’t do things in isolation. And so I suspect this idea of research culture isn’t just true of AI — you’d like it to be true of many subjects that people study.”

Meanwhile, Susan Schneider, a professor at the University of Connecticut, expressed concern about whether governments would implement the Principle.

“This is a nice ideal,” she said, “but unfortunately there may be organizations, including governments, that don’t follow principles of transparency and cooperation. … Concerning those who might resist the cultural norm of cooperation and transparency, in the domestic case, regulatory agencies may be useful.”

“Still,” she added, “it is important that we set forth the guidelines, and aim to set norms that others feel they need to follow. … Calling attention to AI safety is very important.”

“I love the sentiment of it, and I completely agree with it,” said John Havens, Executive Director of The IEEE Global Initiative for Ethical Considerations in Artificial Intelligence and Autonomous Systems.

“But,” he continued, “I think defining what a culture of cooperation, trust, and transparency is… what does that mean? Where the ethicists come into contact with the manufacturers, there is naturally going to be the potential for polarization. And on the risk or legal compliance side, they feel that the technologists may not be thinking of certain issues. … You build that culture of cooperation, trust, and transparency when both sides say, as it were, ‘Here’s the information we really need to progress our work forward. How do we get to know what you need more, so that we can address that well with these questions?’ … This is great, but the next sentence should be: Give me a next step to make that happen.”

Uniting a Fragmented Community

Patrick Lin, a professor at California Polytechnic State University, saw a different problem, one specific to the AI community, that could create challenges as its members try to build trust and cooperation.

Lin explained, “I think building a cohesive culture of cooperation is going to help in a lot of things. It’s going to help accelerate research and avoid a race, but the big problem I see for the AI community is that there is no AI community, it’s fragmented, it’s a Frankenstein-stitching together of various communities. You have programmers, engineers, roboticists; you have data scientists, and it’s not even clear what a data scientist is. Are they people who work in statistics or economics, or are they engineers, are they programmers? … There’s no cohesive identity, and that’s going to be super challenging to creating a cohesive culture that cooperates and trusts and is transparent, but it is a worthy goal.”

Implementing the Principle

To address these concerns about successfully implementing a beneficial AI research culture, I turned to researchers at the Centre for the Study of Existential Risk (CSER). Shahar Avin, a research associate at CSER, pointed out that the “AI research community already has quite remarkable norms when it comes to cooperation, trust and transparency, from the vibrant atmosphere at NIPS, AAAI and IJCAI, to the increasing number of research collaborations (both in terms of projects and multiple-position holders) between academia, industry and NGOs, to the rich blogging community across AI research that doesn’t shy away from calling out bad practices or norm infringements.”

Martina Kunz also highlighted the efforts by the IEEE for a global-ethics-of-AI initiative, as well as the formation of the Partnership on AI, “in particular its goal to ‘develop and share best practices’ and to ‘provide an open and inclusive platform for discussion and engagement.’”

Avin added, “The commitment of AI labs in industry to open publication is commendable, and seems to be growing into a norm that pressures historically less-open companies to open up about their research. Frankly, the demand for high-end AI research skills means researchers, either as individuals or groups, can make strong demands about their work environment, from practical matters of salary and snacks to normative matters of openness and project choice.

“The strong individualism in AI research also suggests that the way to foster cooperation on long term beneficial AI will be to discuss potential risks with researchers, both established and in training, and foster a sense of responsibility and custodianship of the future. An informed, ethically-minded and proactive research cohort, which we already see the beginnings of, would be in a position to enshrine best practices and hold up their employers and colleagues to norms of beneficial AI.”

What Do You Think?

With collaborations like the Partnership on AI forming, it’s possible we’re already seeing signs that industry and academia are starting to move in the direction of cooperation, trust, and transparency. But is that enough, or is it necessary that world governments join? Overall, how can AI companies and research labs work together to ensure they’re sharing necessary safety research without sacrificing their ideas and products?

This article is part of a series on the 23 Asilomar AI Principles. The Principles offer a framework to help artificial intelligence benefit as many people as possible. But, as AI expert Toby Walsh said of the Principles, “Of course, it’s just a start. … a work in progress.” The Principles represent the beginning of a conversation, and now we need to follow up with broad discussion about each individual principle. You can read the discussions about previous principles here.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

Other posts about AI, AI Safety Principles, Recent News

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

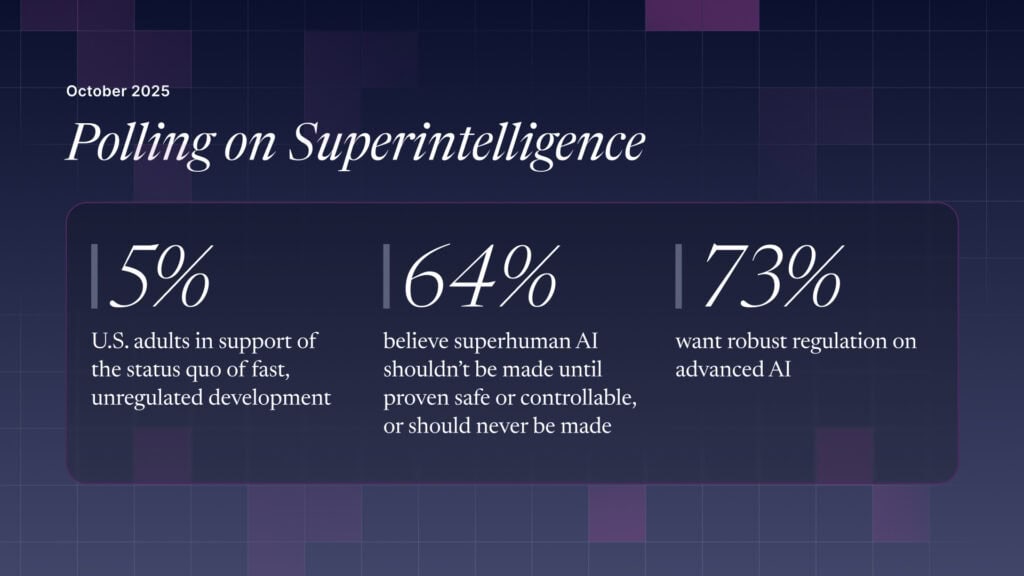

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI