European Parliament Passes Resolution Supporting a Ban on Killer Robots


The European Parliament passed a resolution on September 12, 2018, calling for an international ban on lethal autonomous weapons systems (LAWS). The resolution was adopted with 82% of voting members in favor.

Among other things, the resolution calls on EU Member States and the European Council “to develop and adopt, as a matter of urgency … a common position on lethal autonomous weapon systems that ensures meaningful human control over the critical functions of weapon systems, including during deployment.”

The resolution also urges Member States and the European Council “to work towards the start of international negotiations on a legally binding instrument prohibiting lethal autonomous weapons systems.”

This call for urgency comes shortly after United Nations talks in which countries were unable to reach a consensus on whether to consider a ban on LAWS. Many hope that statements like this one from leading government bodies could help sway the handful of countries still holding out against a ban.

Daan Kayser of PAX, one of the NGO members of the Campaign to Stop Killer Robots, said, “The voice of the European parliament is important in the international debate. At the UN talks in Geneva this past August it was clear that most European countries see the need for concrete measures. A European parliament resolution will add to the momentum toward the next step.”

The countries that took the strongest stances against a LAWS ban at the recent UN meeting were the United States, Russia, South Korea, and Israel.

Scientists’ Voices Are Heard

Also mentioned in the resolution were the many open letters signed by AI researchers and scientists from around the world, who are calling on the UN to negotiate a ban on LAWS.

Two sections of the resolution stated:

“having regard to the open letter of July 2015 signed by over 3,000 artificial intelligence and robotics researchers and that of 21 August 2017 signed by 116 founders of leading robotics and artificial intelligence companies warning about lethal autonomous weapon systems, and the letter by 240 tech organisations and 3,089 individuals pledging never to develop, produce or use lethal autonomous weapon systems,” and

“whereas in August 2017, 116 founders of leading international robotics and artificial intelligence companies sent an open letter to the UN calling on governments to ‘prevent an arms race in these weapons’ and ‘to avoid the destabilising effects of these technologies.’”

Toby Walsh, a prominent AI researcher who helped create the letters, said, “It’s great to see politicians listening to scientists and engineers. Starting in 2015, we’ve been speaking loudly about the risks posed by lethal autonomous weapons. The European Parliament has joined the calls for regulation. The challenge now is for the United Nations to respond. We have several years of talks at the UN without much to show. We cannot let a few nations hold the world hostage, to start an arms race with technologies that will destabilize the current delicate world order and that many find repugnant.”

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014.

Related content

Other posts about AI, Recent News

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

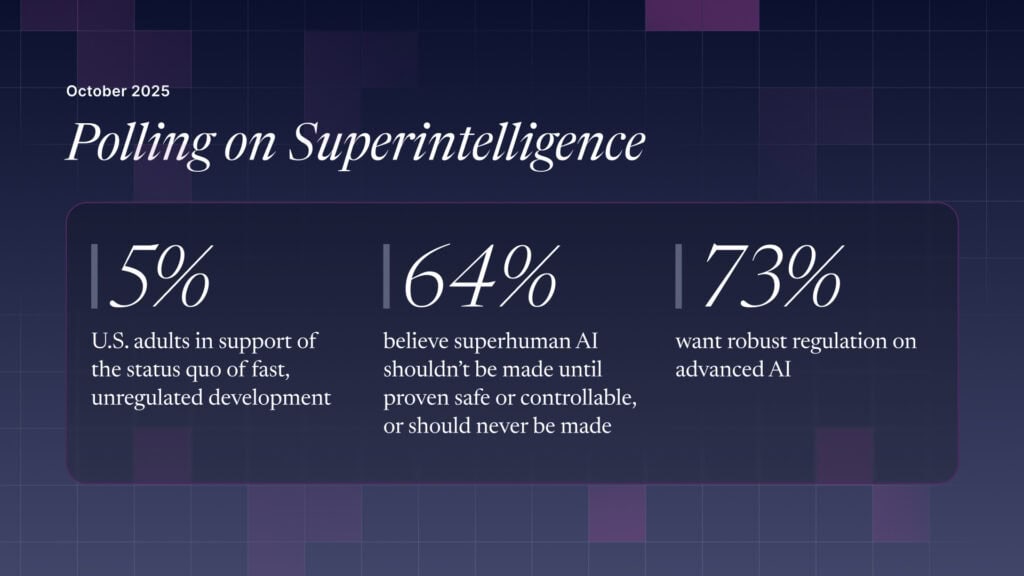

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI