Research for Beneficial Artificial Intelligence

Research Goal: The goal of AI research should be to create not undirected intelligence, but beneficial intelligence.

It’s no coincidence that the first Asilomar Principle is about research. On the face of it, the Research Goal Principle may not seem as glamorous or exciting as some of the other Principles that more directly address how we’ll interact with AI and the impact of superintelligence. But it’s from this first Principle that all of the others are derived.

Simply put, without AI research and without specific goals by researchers, AI cannot be developed. However, participating in research and working toward broad AI goals without considering the possible long-term effects of the research could be detrimental to society.

There’s a scene in Jurassic Park in which Jeff Goldblum’s character laments that the scientists who created the dinosaurs “were so preoccupied with whether or not they could that they didn’t stop to think if they should.” Until recently, AI researchers have also focused primarily on figuring out what they could accomplish, without longer-term considerations, and for good reason: scientists were just trying to get their AI programs to work at all, and the results were far too limited to pose any kind of threat.

But in the last few years, scientists have made great headway with artificial intelligence. The impacts of AI on society are already being felt, and as the issues of bias and discrimination that are already emerging show, those impacts aren’t always good.

Attitude Shift

Unfortunately, there’s still a culture within AI research that’s too accepting of the idea that the developers aren’t responsible for how their products are used. Stuart Russell compares this attitude to that of civil engineers, who would never be allowed to say something like, “I just design the bridge; someone else can worry about whether it stays up.”

Joshua Greene, a psychologist from Harvard, agrees. He explains:

“I think that is a bookend to the Common Good Principle [#23] – the idea that it’s not okay to be neutral. It’s not okay to say, ‘I just make tools and someone else decides whether they’re used for good or ill.’ If you’re participating in the process of making these enormously powerful tools, you have a responsibility to do what you can to make sure that this is being pushed in a generally beneficial direction. With AI, everyone who’s involved has a responsibility to be pushing it in a positive direction, because if it’s always somebody else’s problem, that’s a recipe for letting things take the path of least resistance, which is to put the power in the hands of the already powerful so that they can become even more powerful and benefit themselves.”

What’s “Beneficial”?

Other AI experts I spoke with agreed with the general idea of the Principle, but didn’t quite see eye-to-eye on how it was worded. Patrick Lin, for example, was concerned about the use of the word “beneficial” and what it meant, while John Havens appreciated the word precisely because it forces us to consider what “beneficial” means in this context.

“I generally agree with this research goal,” explained Lin, a philosopher at Cal Poly. “Given the potential of AI to be misused or abused, it’s important to have a specific positive goal in mind. I think where it might get hung up is what this word ‘beneficial’ means. If we’re directing it towards beneficial intelligence, we’ve got to define our terms; we’ve got to define what beneficial means, and that to me isn’t clear. It means different things to different people, and it’s rare that you could benefit everybody.”

Meanwhile, Havens, the Executive Director of The IEEE Global Initiative for Ethical Considerations in Artificial Intelligence and Autonomous Systems, was pleased the word forced the conversation.

“I love the word beneficial,” Havens said. “I think sometimes inherently people think that intelligence, in one sense, is always positive. Meaning, because something can be intelligent, or autonomous, and that can advance technology, that that is a ‘good thing’. Whereas the modifier ‘beneficial’ is excellent, because you have to define: What do you mean by beneficial? And then, hopefully, it gets more specific, and it’s: Who is it beneficial for? And, ultimately, what are you prioritizing? So I love the word beneficial.”

AI researcher Susan Craw, a professor at Robert Gordon University, also agreed with the Principle but questioned the order of the phrasing.

“Yes, I agree with that,” Craw said, but added, “I think it’s a little strange the way it’s worded, because of ‘undirected.’ It might even be better the other way around, which is, it would be better to create beneficial research, because that’s a more well-defined thing.”

Long-term Research

Roman Yampolskiy, an AI researcher at the University of Louisville, brings the discussion back to the issues of most concern for FLI:

“The universe of possible intelligent agents is infinite with respect to both architectures and goals. It is not enough to simply attempt to design a capable intelligence, it is important to explicitly aim for an intelligence that is in alignment with goals of humanity. This is a very narrow target in a vast sea of possible goals and so most intelligent agents would not make a good optimizer for our values resulting in a malevolent or at least indifferent AI (which is likewise very dangerous). It is only by aligning future superintelligence with our true goals, that we can get significant benefit out of our intellectual heirs and avoid existential catastrophe.”

And with that in mind, we’re excited to announce we’ve launched a new round of grants! If you haven’t seen the Request for Proposals (RFP) yet, you can find it here. The focus of this RFP is on technical research or other projects enabling development of AI that is beneficial to society, and robust in the sense that the benefits are somewhat guaranteed: our AI systems must do what we want them to do.

If you’re a researcher interested in the field of AI, we encourage you to review the RFP and consider applying.

This article is part of a series on the 23 Asilomar AI Principles. The Principles offer a framework to help artificial intelligence benefit as many people as possible. But, as AI expert Toby Walsh said of the Principles, “Of course, it’s just a start. … a work in progress.” The Principles represent the beginning of a conversation, and now we need to follow up with broad discussion about each individual principle. You can read the discussions about previous principles here.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

Other posts about AI, AI Safety Principles, Recent News

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

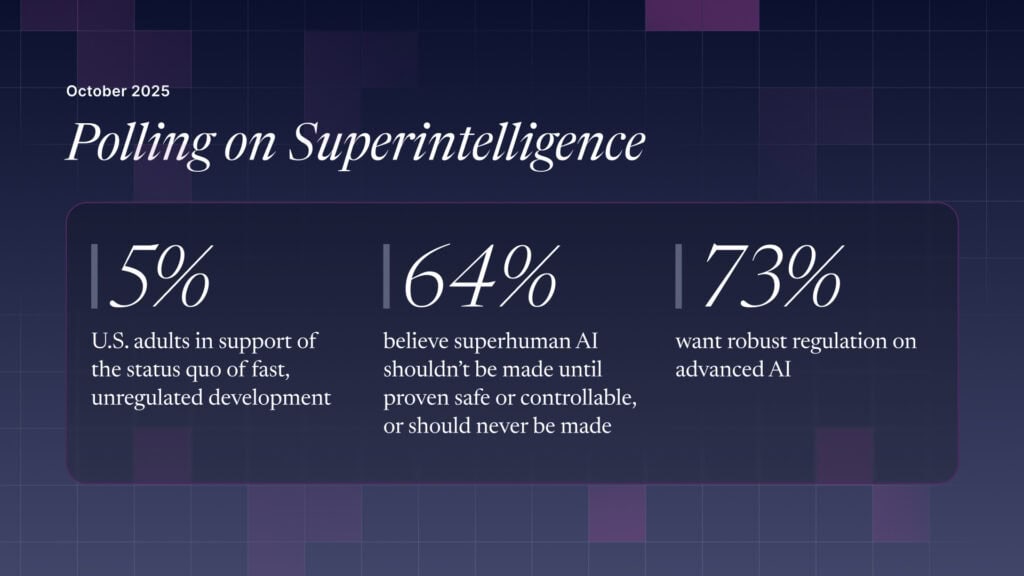

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI