The Psychology of Existential Risk: Moral Judgments about Human Extinction

By Stefan Schubert

This blog post reports on Schubert, S.**, Caviola, L.**, Faber, N. The Psychology of Existential Risk: Moral Judgments about Human Extinction. Scientific Reports [Open Access]. It was originally posted on the University of Oxford’s Practical Ethics: Ethics in the News blog.

Humanity’s ever-increasing technological powers can, if handled well, greatly improve life on Earth. But if they’re not handled well, they may instead cause our ultimate demise: human extinction. Recent years have seen an increased focus on the threat that emerging technologies such as advanced artificial intelligence could pose to humanity’s continued survival (see, e.g., Bostrom, 2014; Ord, forthcoming). A common view among these researchers is that human extinction would be much worse, morally speaking, than almost-as-severe catastrophes from which we could recover. Since humanity’s future could be very long and very good, it is imperative that we survive, on this view.

Do laypeople share the intuition that human extinction is much worse than near-extinction? In a famous passage in Reasons and Persons, Derek Parfit predicted that they would not. Parfit invited the reader to consider three outcomes:

1) Peace

2) A nuclear war that kills 99% of the world’s existing population.

3) A nuclear war that kills 100%.

In Parfit’s view, 3) is the worst outcome, and 1) is the best outcome. The interesting part concerns the relative differences, in terms of badness, between the three outcomes. Parfit thought that the difference between 2) and 3) is greater than the difference between 1) and 2), because of the unique badness of extinction. But he also predicted that most people would disagree with him, and instead find the difference between 1) and 2) greater.

Parfit’s hypothesis is often cited and discussed, but it hasn’t previously been tested. My colleagues Lucius Caviola and Nadira Faber and I recently undertook such testing. A preliminary study showed that most people judge human extinction to be very bad, and think that governments should invest resources to prevent it. We then turned to Parfit’s question of whether they find it uniquely bad even compared to near-extinction catastrophes. We used a slightly amended version of Parfit’s thought experiment to remove potential confounders:

A) There is no catastrophe.

B) There is a catastrophe that immediately kills 80% of the world’s population.

C) There is a catastrophe that immediately kills 100% of the world’s population.

A large majority found the difference, in terms of badness, between A) and B) to be greater than the difference between B) and C). Thus, Parfit’s hypothesis was confirmed.

However, we also found that this judgment wasn’t particularly stable. Some participants were told, after having read about the three outcomes, that they should remember to consider their respective long-term consequences. They were reminded that it is possible to recover from a catastrophe killing 80%, but not from a catastrophe killing everyone. This mere reminder made a significantly larger number of participants find the difference between B) and C) the greater one. And still greater numbers (a clear majority) found the difference between B) and C) the greater one when the descriptions specified that the future would be extraordinarily long and good if humanity survived.

Our interpretation is that when confronted with Parfit’s question, people by default focus on the immediate harm associated with the three outcomes. In terms of immediate harm, the difference between A) and B) is greater than the difference between B) and C): 80% of the population dies in the first step, and the remaining 20% in the second. People therefore judge that the former difference is greater in terms of badness as well. But even relatively minor tweaks can make more people focus on the long-term consequences of the outcomes, instead of the immediate harm. And those long-term consequences become the key consideration for most people, under the hypothesis that the future will be extraordinarily long and good.

A conclusion from our studies is thus that laypeople’s views on the badness of extinction may be relatively unstable. Though such effects of relatively minor tweaks and re-framings are ubiquitous in psychology, they may be especially large when it comes to questions about human extinction and the long-term future. That may partly be because of the intrinsic difficulty of those questions, and partly because most people haven’t thought a lot about them previously.

In spite of the increased focus on existential risk and the long-term future, there has been relatively little research on how people think about those questions. There are several reasons why such research could be valuable. For instance, it might allow us to get a better sense of how much people will want to invest in safeguarding our long-term future. It might also inform us of potential biases to correct for.

The specific issues that deserve more attention include people’s empirical estimates of whether humanity will survive and what will happen if we do, as well as their moral judgments about how valuable different possible futures (e.g., involving different population sizes and levels of well-being) would be. Another important issue is whether we think about the long-term future in a different frame of mind because of the great “psychological distance” (cf. Trope and Liberman, 2010). We expect the psychology of longtermism and existential risk to be a growing field in the coming years.

** Equal contribution.

Related content

Other posts about Existential Risk, Recent News

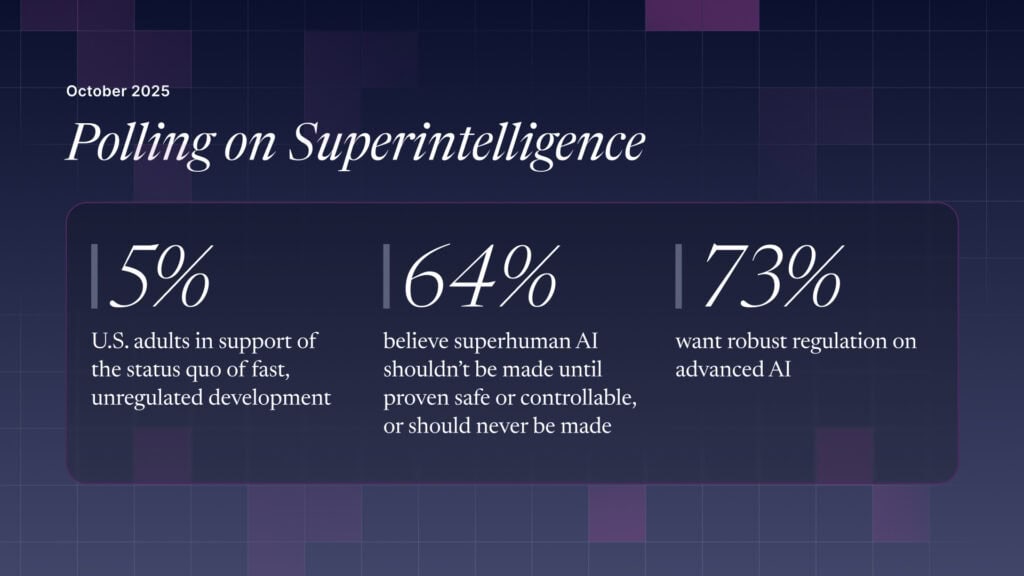

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI

Are we close to an intelligence explosion?

Could we switch off a dangerous AI?