How to Create AI That Can Safely Navigate Our World — An Interview With Andre Platzer

Contents

Over the last few decades, the unprecedented pace of technological progress has allowed us to upgrade and modernize much of our infrastructure and solve many long-standing logistical problems. For example, Babylon Health’s AI-driven smartphone app is helping assess and prioritize 1.2 million patients in North London, electronic transfers allow us to instantly send money nearly anywhere in the world, and, over the last 20 years, GPS has revolutionized how we navigate, how we track and ship goods, and how we regulate traffic.

However, exponential growth comes with its own set of hurdles that must be navigated. The foremost issue is that it’s exceedingly difficult to predict how various technologies will evolve. As a result, it becomes challenging to plan for the future and ensure that the necessary safety features are in place.

This uncertainty is particularly worrisome when it comes to technologies that could pose existential challenges — artificial intelligence, for example.

Yet, despite the unpredictable nature of tomorrow’s AI, certain challenges are foreseeable. Case in point, regardless of the developmental path that AI agents ultimately take, these systems will need to be capable of making intelligent decisions that allow them to move seamlessly and safely through our physical world. Indeed, one of the most impactful uses of artificial intelligence encompasses technologies like autonomous vehicles, robotic surgeons, user-aware smart grids, and aircraft control systems — all of which combine advanced decision-making processes with the physics of motion.

Such systems are known as cyber-physical systems (CPS). The next generation of advanced CPS could lead us into a new era in safety, reducing crashes by 90% and saving the world’s nations hundreds of billions of dollars a year — but only if such systems are themselves implemented correctly.

This is where Andre Platzer, Associate Professor of Computer Science at Carnegie Mellon University, comes in. Platzer’s research is dedicated to ensuring that CPS benefit humanity and don’t cause harm. Practically speaking, this means ensuring that the systems are flexible, reliable, and predictable.

What Does it Mean to Have a Safe System?

Cyber-physical systems have been around, in one form or another, for quite some time. Air traffic control systems, for example, have long relied on CPS-type technology for collision avoidance, traffic management, and a host of other decision-making tasks. However, Platzer notes that as CPS continue to advance, and as they are increasingly required to integrate more complicated automation and learning technologies, it becomes far more difficult to ensure that CPS are making reliable and safe decisions.

To better clarify the nature of the problem, Platzer turns to self-driving vehicles. In advanced systems like these, he notes that we need to ensure that the technology is sophisticated enough to be flexible, as it has to be able to safely respond to any scenario that it confronts. In this sense, “CPS are at their best if they’re not just running very simple , but if they’re running much more sophisticated and advanced systems,” Platzer notes. However, when CPS utilize advanced autonomy, because they are so complex, it becomes far more difficult to prove that they are making systematically sound choices.

In this respect, the more sophisticated the system becomes, the more we are forced to sacrifice some of the predictability and, consequently, the safety of the system. As Platzer articulates, “the simplicity that gives you predictability on the safety side is somewhat at odds with the flexibility that you need to have on the artificial intelligence side.”

The ultimate goal, then, is to find equilibrium between the flexibility and predictability — between the advanced learning technology and the proof of safety — to ensure that CPS can execute their tasks both safely and effectively. Platzer describes this overall objective as a kind of balancing act, noting that, “with cyber-physical systems, in order to make that sophistication feasible and scalable, it’s also important to keep the system as simple as possible.”

How to Make a System Safe

The first step in navigating this issue is to determine how researchers can verify that a CPS is truly safe. In this respect, Platzer notes that his research is driven by this central question: if scientists have a mathematical model for the behavior of something like a self-driving car or an aircraft, and if they have the conviction that all the behaviors of the controller are safe, how do they go about proving that this is actually the case?

The answer is an automated theorem prover, which is a computer program that assists with the development of rigorous mathematical correctness proofs.

When it comes to CPS, the highest safety standard is such a mathematical correctness proof, which shows that the system always produces the correct output for any given input. It does this by using formal methods of mathematics to prove or disprove the correctness of the control algorithms underlying a system.

After this proof technology has been identified and created, Platzer asserts that the next step is to use it to augment the capabilities of artificially intelligent learning agents — increasing their complexity while simultaneously verifying their safety.

Eventually, Platzer hopes that this will culminate in technology that allows CPS to recover from situations where the expected outcome didn’t turn out to be an accurate model of reality. For example, if a self-driving car assumes another car is speeding up when it is actually slowing down, it needs to be able to quickly correct this error and switch to the correct mathematical model of reality.

The more complex such seamless transitions are, the more complex they are to implement. But they are the ultimate amalgamation of safety and flexibility or, in other words, the ultimately combination of AI and safety proof technology.

Creating the Tech of Tomorrow

To date, one of the biggest developments to come from Platzer’s research is the KeYmaera X prover, which Platzer characterizes as a “gigantic, quantum leap in terms of the reliability of our safety technology, passing far beyond in rigor than what anyone else is doing for the analysis of cyber-physical systems.”

The KeYmaera X prover, which was created by Platzer and his team, is a tool that allows users to easily and reliably construct mathematical correctness proofs for CPS through an easy-to-use interface.

More technically, KeYmaera X is a hybrid systems theorem prover that analyzes the control program and the physical behavior of the controlled system together, in order to provide both efficient computation and the necessary support for sophisticated safety proof techniques. Ultimately, this work builds off of a previous iteration of the technology known as KeYmaera. However, Platzer states that, in order to optimize the tool and make it as simple as possible, the team essentially “started from scratch.”

Emphasizing just how dramatic these most recent changes are, Platzer notes that, in the previous prover, the correctness of the statements was dependent on some 66,000 lines of code. Notably, each of these 66,000 lines were all critical to the correctness of the verdict. According to Platzer, this poses a problem, as it’s exceedingly difficult to ensure that all of the lines are implemented correctly. Although the latest iteration of KeYmaera is ultimately just as large as the previous version, in KeYmaera X, the part of the prover that is responsible for verifying the correctness is a mere 2,000 lines of code.

This allows the team to evaluate the safety of cyber-physical systems more reliably than ever before. “We identified this microkernel, this really minuscule part of the system that was responsible for the correctness of the answers, so now we have a much better chance of making sure that we haven’t accidentally snuck any mistakes into the reasoning engines,” Platzer said. Simultaneously, he notes that it enables users to do much more aggressive automation in their analysis. Platzer explains, “If you have a small part of the system that’s responsible for the correctness, then you can do much more liberal automation. It can be much more courageous because there’s an entire safety net underneath it.”

For the next stage of his research, Platzer is going to begin integrating multiple mathematical models that could potentially describe reality into a CPS. To explain these next steps, Platzer returns once more to self-driving cars: “If you’re following another driver, you can’t know if the driver is currently looking for a parking spot, trying to get somewhere quickly, or about to change lanes. So, in principle, under those circumstances, it’s a good idea to have multiple possible models and comply with the ones that may be the best possible explanation of reality.”

Ultimately, the goal is to allow the CPS to increase their flexibility and complexity by switching between these multiple models as they become more or less likely explanations of reality. “The world is a complicated place,” Platzer explains, “so the safety analysis of the world will also have to be a complicated one.”

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

Other posts about AI, AI Research, Grants Program, Recent News

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

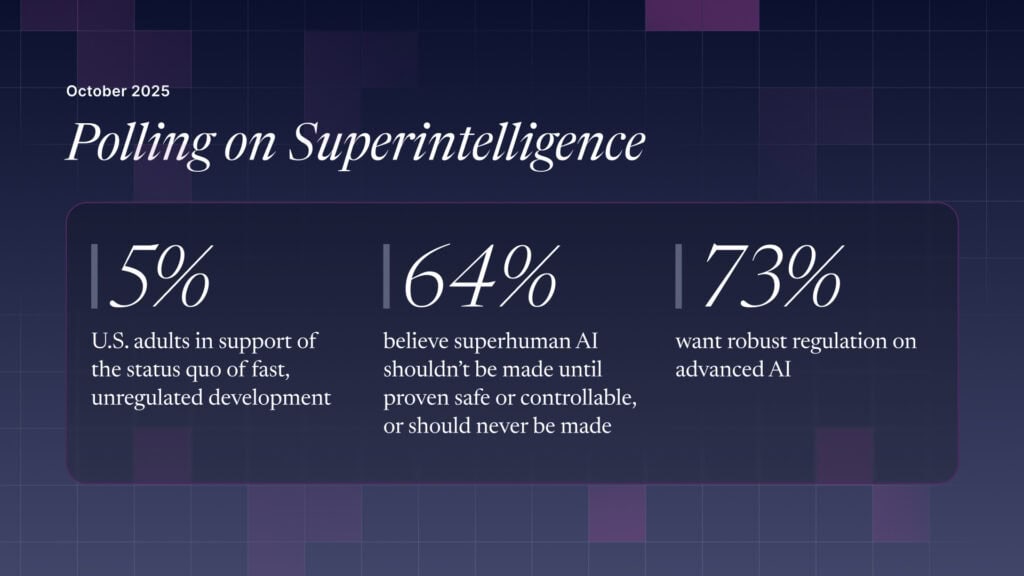

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI